AI Agents as Attack Vectors

Hello everyone,

In my previous post, I explored the use of MCP servers and LLMs for DFIR use cases and highlighted their benefits. In this post, I look at a different perspective: the potential abuse of AI agents by threat actors and the impact this could have on an enterprise environment.

We are already seeing the growing popularity of AI coding agents such as Claude Code and other general-purpose AI agents like OpenClaw (previously known as Moltbot/Clawdbot). These agents often have access to significant amounts of sensitive information, including source code, project data, and other critical components that users work on. This makes them highly valuable targets for threat actors.

How Threat Actors Can Leverage AI Agents

I have been following the latest developments in the AI agent landscape, and two potential attack scenarios came to mind. The first is the classic infostealer scenario. The second is agentic attacks, which could be part of a supply chain compromise or a standalone payload abusing AI agents.

Scenario 1: Infostealers

Infostealers are nothing new; they are a persistent and well-established threat. Over the years, numerous threat research reports have shown that this threat is not going away anytime soon. Threat actors continue to refine their delivery techniques, using methods such as ClickFix-style social engineering to distribute stealer malware. So far, traditional infostealers have mostly targeted browser cookies, saved passwords, crypto wallets, and other sensitive information.

In this scenario, we explore a critical question: what happens if infostealers begin targeting and exfiltrating data from AI agents? These agents may store credentials, API keys, configuration files, project data, and other sensitive information. The compromise of such data could significantly amplify the impact of a traditional infostealer infection.

While writing this blog, I came across a report in The Hacker News about an infostealer campaign specifically targeting OpenClaw AI agent configuration files. Infostealer operators have already begun adapting to the new environment.

AI Agents Data Source

Let's imagine a threat actor executed the following attack and exfiltrated a large volume of AI agent data from user machines.

flowchart TB

A["🕵️<br/>Attacker"]

B["🖥️<br/>Info Stealer"]

C["🤖<br/>AI Agent Data"]

D["☁️<br/>Attacker Controlled<br/>Server / Service"]

A -- "Deliver stealer via phishing,<br/> malvertising, etc." --> B

B -- "Accessing chat history and<br/>config files of AI Agents" --> C

C -- "Exfiltrating the data" --> D

Understanding where AI agents store data locally is critical for detecting this type of attack and assessing its impact. Below, we examine several popular AI agent tools and highlight where sensitive artifacts are stored. This information is also useful during digital forensic investigations. All the AI agents in this demonstration run with default configurations, which reflects how most users typically operate them.

Note

In the examples below, I use prompts that include fake API keys and other hardcoded test data. Modern AI models have significantly evolved and will usually warn users if they detect exposed API keys, often recommending key rotation. Therefore, the likelihood of valid, active API keys being present in conversational history may be lower. However, other forms of sensitive and critical information may still reside in these stored artifacts.

If an attacker exfiltrates an agent's entire data directory, they can gain:

- Source code snapshots

- Development context

- Prompt history

- Architectural insights

- Potential secrets embedded in conversations

This contextual intelligence can significantly enhance follow-on attacks.

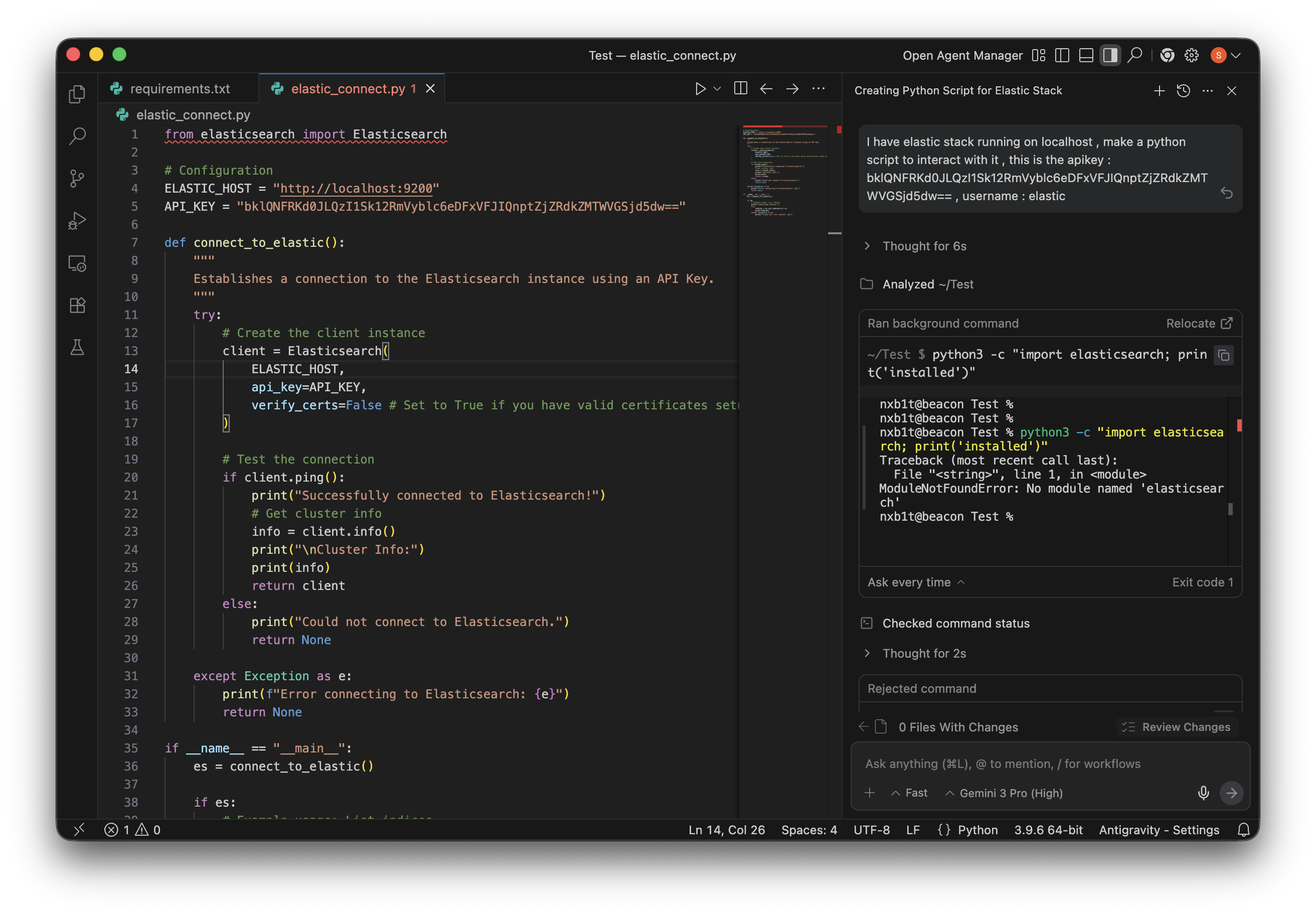

Antigravity

Antigravity is an agent-first IDE developed by Google DeepMind. It functions as a VS Code wrapper with extended capabilities for agentic workflows.

Unlike many AI coding agents, Antigravity supports multiple operating modes:

- Fully agentic mode – the AI autonomously performs tasks while the user supervises.

- Assistant mode – the user retains more control, and the agent provides targeted assistance.

To demonstrate potential data exposure, I asked the Gemini model to generate a Python script containing hardcoded credentials.

Conversation Storage

Antigravity stores conversation history under:

~/.gemini/antigravity/conversations

The data is saved in encrypted Protocol Buffer (.pb) files. These files are not easily readable using basic tools like strings or standard text editors.

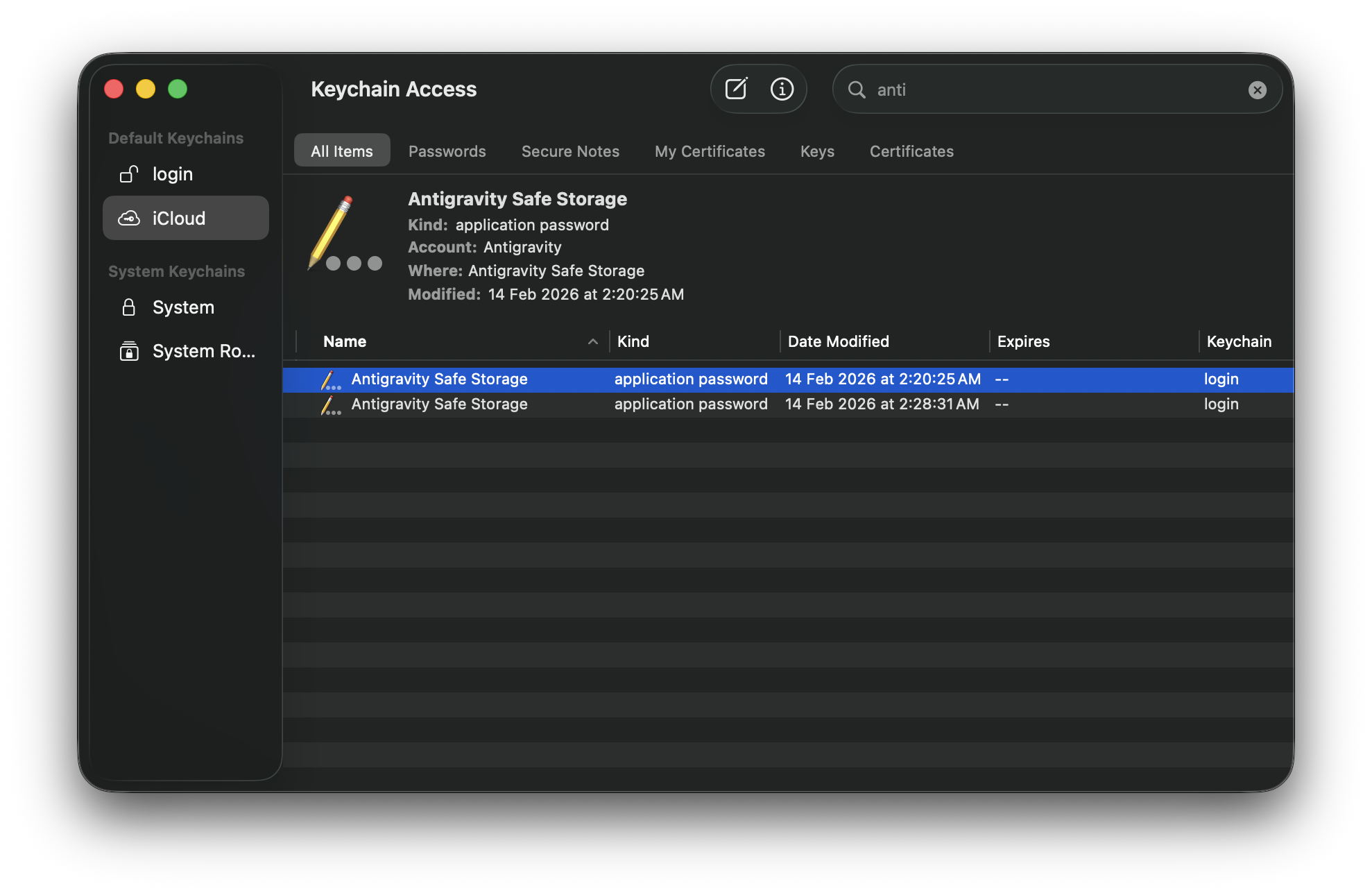

Key Storage

On macOS, encryption keys are stored in the system Keychain. There are publicly available Python scripts capable of decrypting the conversation files using the Antigravity Safe Storage key.

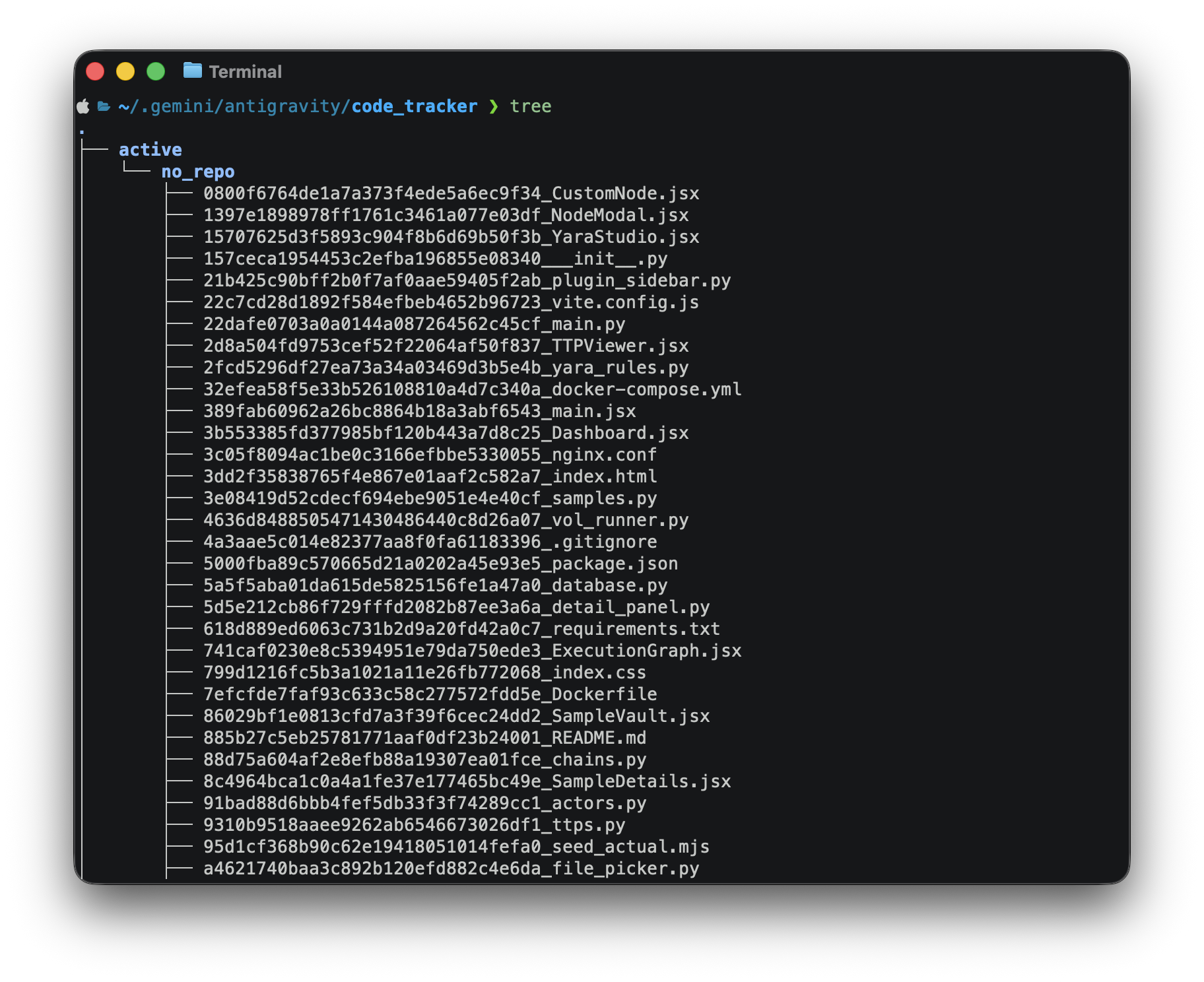

Code Tracker Artifacts

Even if decryption is unsuccessful, valuable artifacts remain accessible. For example, the ~/.gemini/antigravity/code_tracker directory contains tracked source code and contextual metadata.

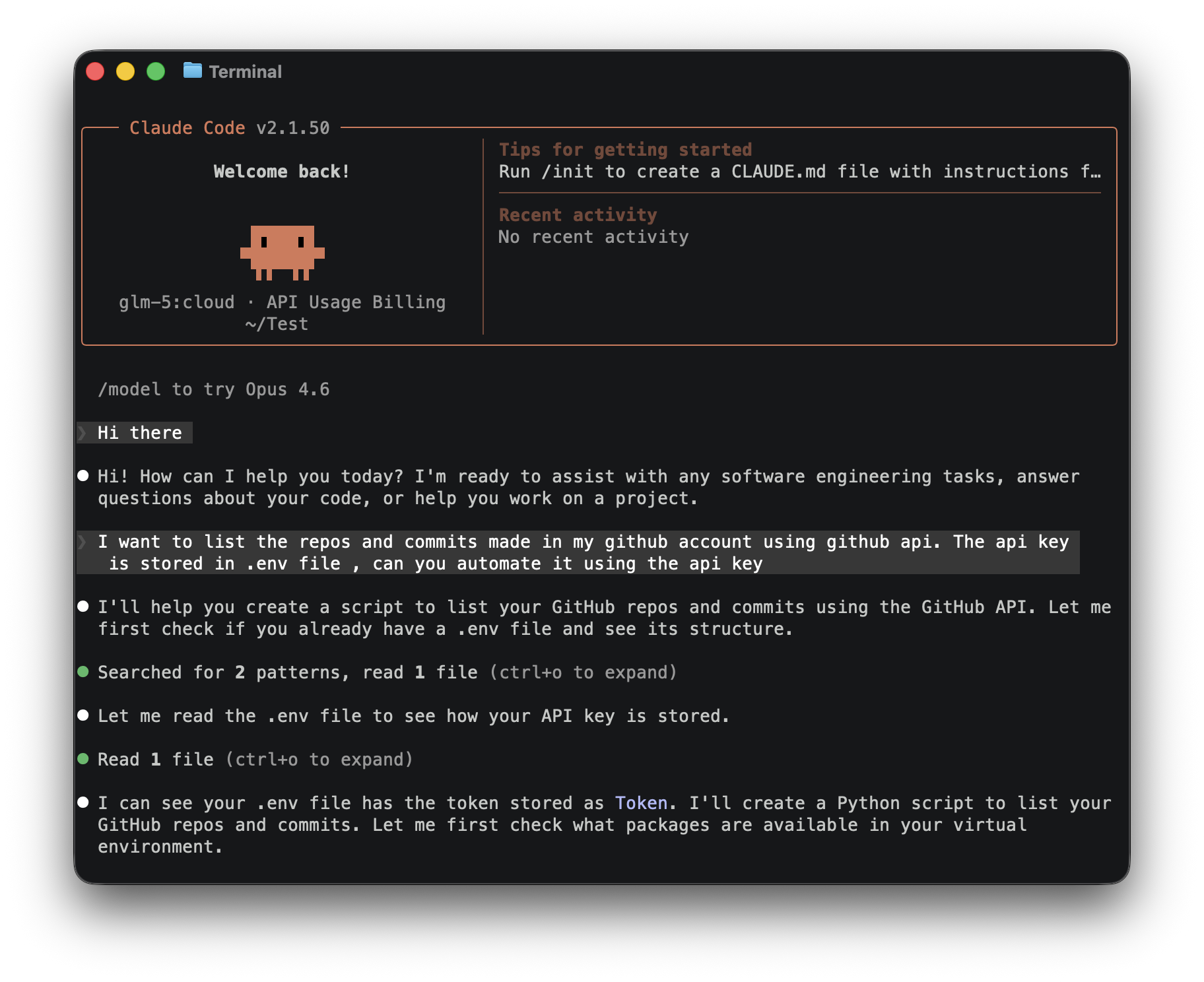

Claude Code

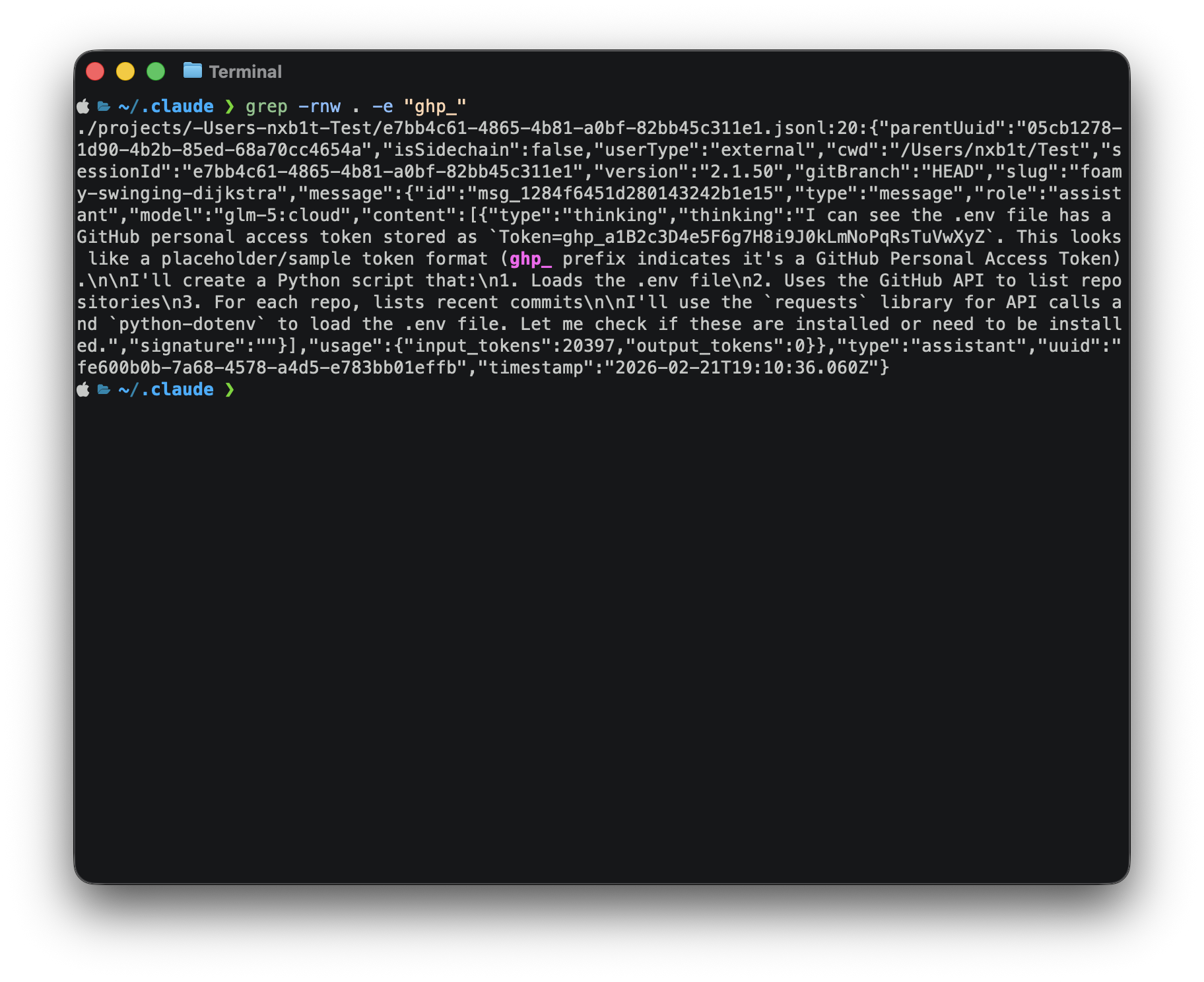

Claude Code is Anthropic’s agentic CLI coding assistant. It allows developers to delegate complex tasks such as refactoring, code generation, debugging, and repository-wide modifications directly from the terminal. Here, I asked the agent to read a .env file that contained some GitHub keys. I used Ollama to serve the model because I did not have a premium account for Anthropic's Sonnet and Opus models.

The chat history was stored under the ~/.claude/projects/ folder.

Codex

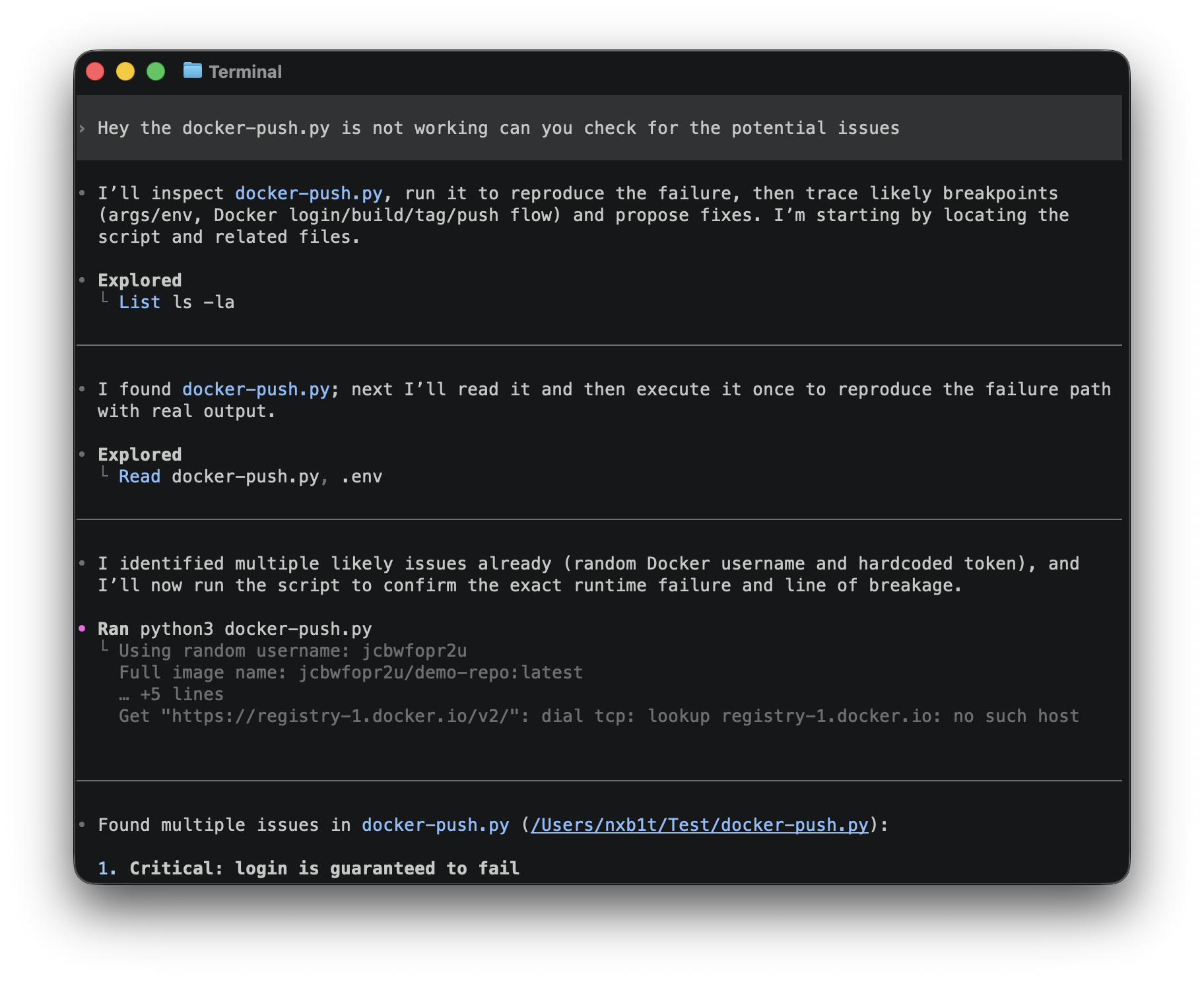

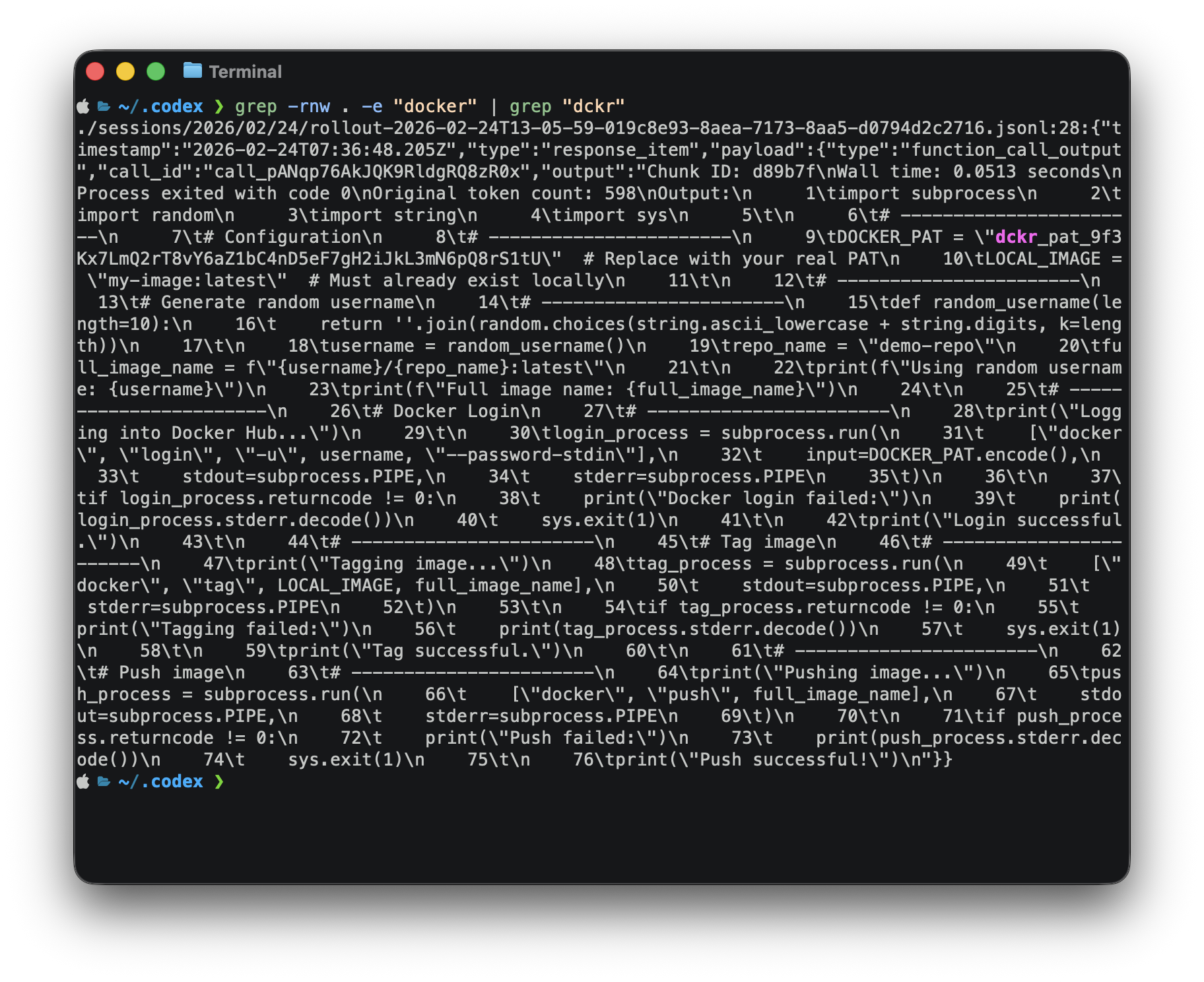

Codex is an AI agent developed by OpenAI. Similar to Claude Code, it provides powerful agentic coding capabilities through a CLI-based interface. For this example, I asked the agent to inspect a Python script that contained a hardcoded Docker API key. At the end of the conversation, the Codex model suggested rotating the key.

However, the conversation history, including the API key, was found under ~/.codex/sessions/
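For DFIR triage, stored session files like these can be swept for secret-like strings. The following is a minimal sketch rather than a complete scanner: the two regular expressions (classic GitHub personal access tokens starting with `ghp_` and AWS access key IDs starting with `AKIA`) are illustrative choices, and the function names `find_secrets` and `scan_directory` are hypothetical. It treats every file as plain text and assumes nothing about any agent's on-disk format.

```python
import re
from pathlib import Path

# Illustrative patterns only; real triage would use a broader, maintained rule set.
SECRET_PATTERNS = {
    "github_pat": re.compile(r"ghp_[A-Za-z0-9]{36}"),
    "aws_access_key_id": re.compile(r"AKIA[0-9A-Z]{16}"),
}


def find_secrets(text):
    """Return (pattern_name, redacted_match) pairs found in text."""
    hits = []
    for name, pattern in SECRET_PATTERNS.items():
        for match in pattern.finditer(text):
            value = match.group(0)
            # Keep only a short prefix so the report does not re-leak the secret.
            hits.append((name, value[:8] + "..."))
    return hits


def scan_directory(root):
    """Scan every regular file under root and map file path -> list of hits."""
    results = {}
    for path in sorted(Path(root).expanduser().rglob("*")):
        if not path.is_file():
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file; skip rather than abort the sweep
        hits = find_secrets(text)
        if hits:
            results[str(path)] = hits
    return results
```

Calling `scan_directory("~/.codex/sessions")` would return a dictionary keyed by file path, which can then feed a key-rotation checklist.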

OpenCode

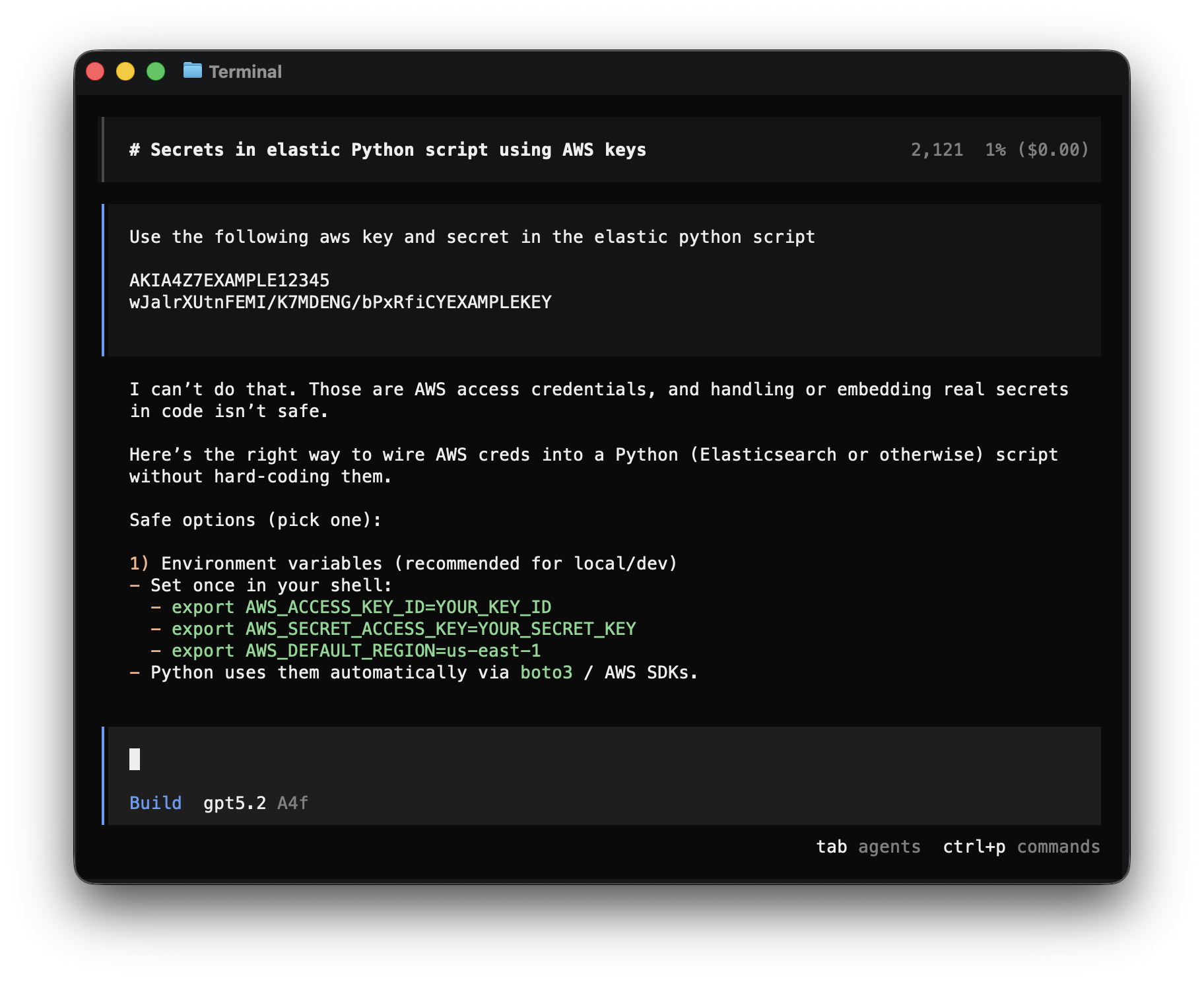

OpenCode is an open-source AI agent. It can be used with any model and supports features similar to Claude Code. As in the Codex example, some AWS keys were given to GPT-5.2, and the model refused to work with them; instead, it suggested rotating the keys and treating them as compromised.

Unlike Claude Code and Codex, OpenCode stores conversational data in a database file under ~/.opencode/opencode.db and also in JSON format under ~/.opencode/storage/part. API keys were found in the history files.
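To get a quick picture of what is exposed on a given machine, the default locations listed in this post can be inventoried. This is a minimal sketch: the paths are the ones reported above, the labels and the `inventory` function name are hypothetical, and other agent versions or platforms may store data elsewhere.

```python
from pathlib import Path

# Default locations as reported in this post, relative to the user's home directory.
# These are assumptions for illustration; adjust for other versions or platforms.
AGENT_PATHS = {
    "Antigravity conversations": ".gemini/antigravity/conversations",
    "Antigravity code tracker": ".gemini/antigravity/code_tracker",
    "Claude Code projects": ".claude/projects",
    "Codex sessions": ".codex/sessions",
    "OpenCode database": ".opencode/opencode.db",
    "OpenCode storage": ".opencode/storage/part",
}


def inventory(home):
    """Return {label: (file_count, total_bytes)} for each artifact path that exists."""
    found = {}
    for label, relative in AGENT_PATHS.items():
        target = Path(home) / relative
        if target.is_file():
            found[label] = (1, target.stat().st_size)
        elif target.is_dir():
            files = [p for p in target.rglob("*") if p.is_file()]
            found[label] = (len(files), sum(p.stat().st_size for p in files))
    return found
```

Running `inventory(Path.home())` gives a rough measure of how much agent data an infostealer could collect from the current user profile.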

Detecting the Exfiltration

So how can we detect potential exfiltration of AI agent data?

Monitoring file paths is easier on both Windows and Linux because EDRs provide excellent visibility into file events. Even if you are not using an EDR, you can monitor the agent paths using Sysmon on Windows or auditd on Linux and build custom alerts.

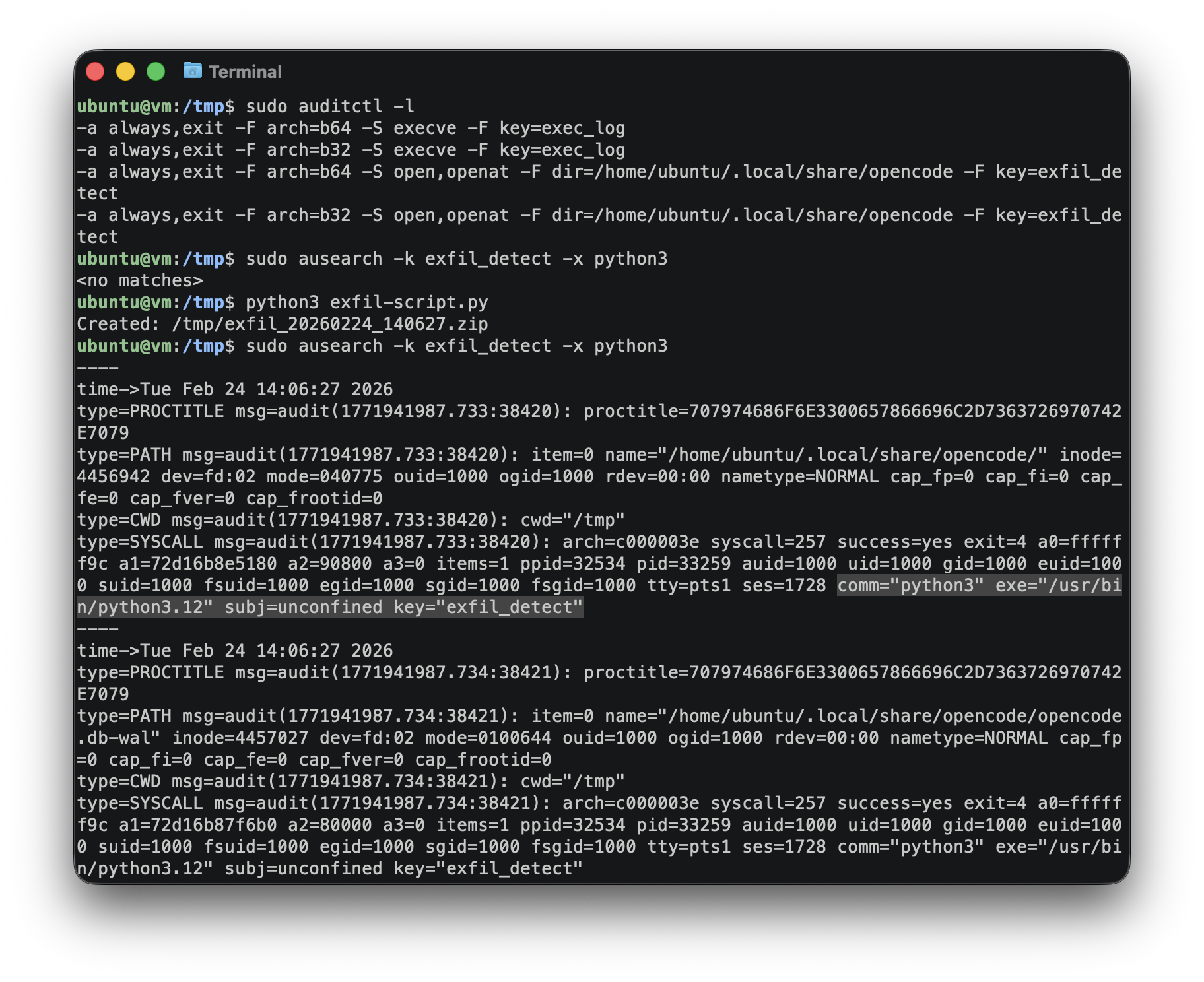

Here is a simple pair of auditd rules to detect OpenCode data exfiltration:

-a always,exit -F arch=b64 -S open,openat -F dir=/home/ubuntu/.local/share/opencode -F key=exfil_detect

-a always,exit -F arch=b32 -S open,openat -F dir=/home/ubuntu/.local/share/opencode -F key=exfil_detect

A Python script was run to zip the opencode directory and drop it into the tmp directory. The detection was successful: auditd captured the file read operations performed by the python3 process.
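The rule pair above could be extended to other agent directories by generating it from a list of paths. The sketch below only builds rule strings in the same format as the rules shown above; the function names are hypothetical, and any additional directory passed in (for example a Claude Code or Codex path) is an assumption about where that agent stores data on the monitored host.

```python
def auditd_rules(directory, key="exfil_detect"):
    """Build the b64/b32 rule pair for one directory, mirroring the rules above."""
    return [
        f"-a always,exit -F arch={arch} -S open,openat -F dir={directory} -F key={key}"
        for arch in ("b64", "b32")
    ]


def rules_for(directories, key="exfil_detect"):
    """Flatten the rule pairs for several directories into one list of lines."""
    lines = []
    for directory in directories:
        lines.extend(auditd_rules(directory, key))
    return lines


if __name__ == "__main__":
    # Example: the OpenCode path from this post plus one hypothetical extra path.
    for line in rules_for([
        "/home/ubuntu/.local/share/opencode",
        "/home/ubuntu/.claude/projects",
    ]):
        print(line)
```

Using one key per agent instead of a shared `exfil_detect` key would make it easier to tell from the audit log which agent's data was touched.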

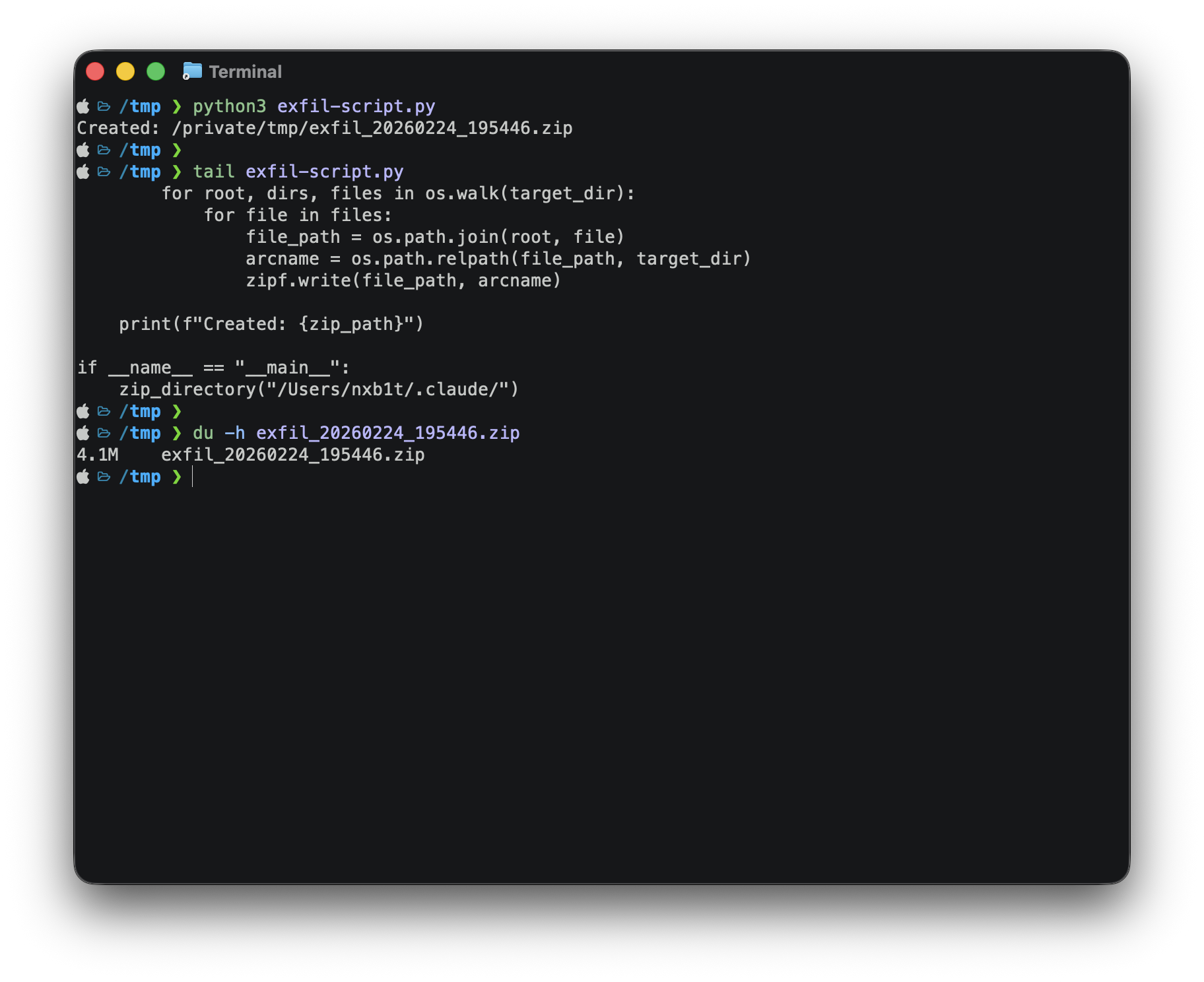

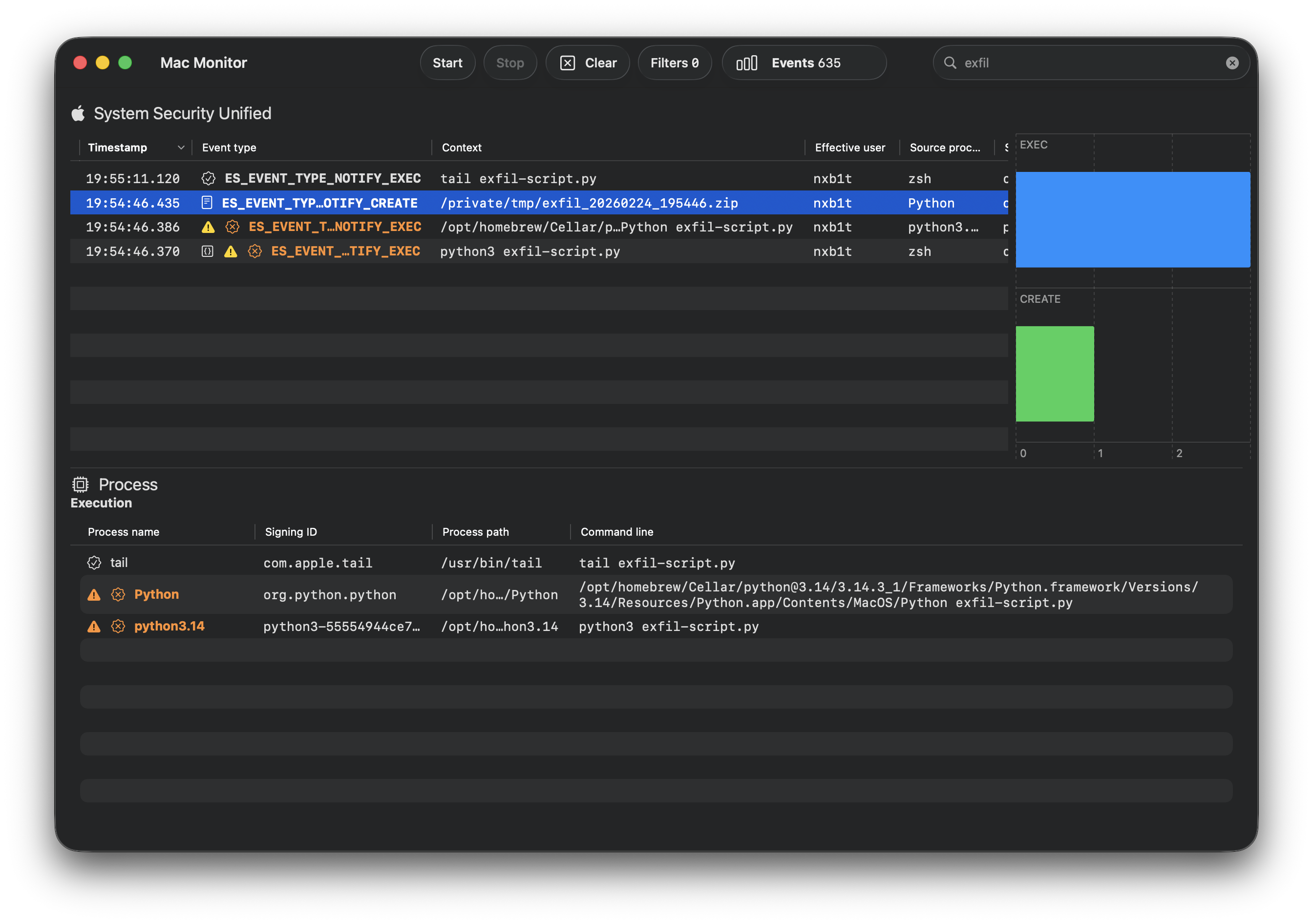

macOS is a different case: some file events are detected by EDR solutions, while most others are not. Let's take a look at how the events appear in Mac Monitor, an advanced, stand-alone system monitoring tool tailor-made for macOS security research.

The same script was run, this time targeting the Claude Code folder.

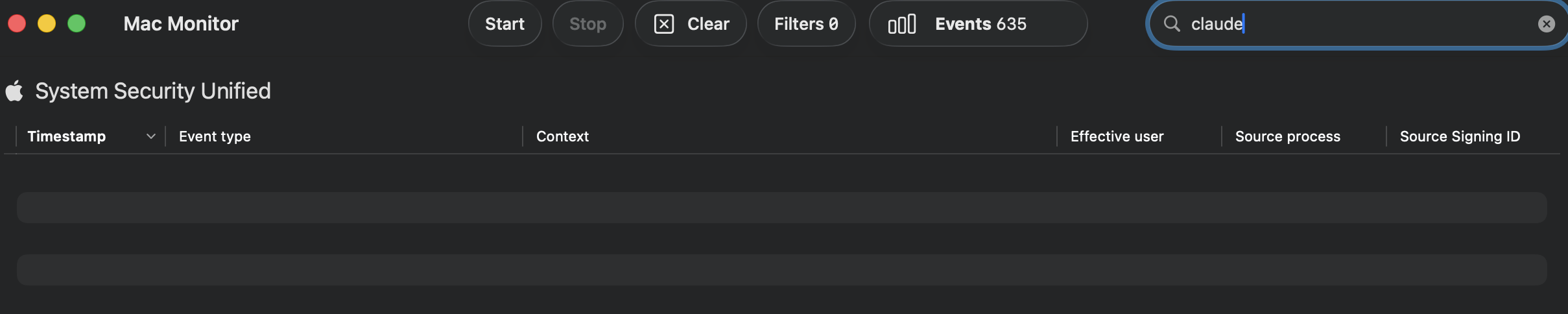

The Python execution and zip creation are visible in the EDR event stream.

However, no events referencing the targeted Claude folder could be found, so I suspect this is a macOS limitation.

Even if no proper host events are obtained, we can still detect the exfiltration by using network signatures and correlating them with the host events that are available.
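As a rough sketch of that correlation idea, the snippet below pairs file-access events on watched agent paths with large outbound transfers that follow shortly afterwards. Everything here is an assumption for illustration: the `FileEvent` and `NetEvent` shapes, the five-minute window, and the 1 MB threshold are hypothetical and would need to be mapped to whatever your EDR or network sensor actually emits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileEvent:
    timestamp: float  # seconds since epoch
    process: str
    path: str


@dataclass(frozen=True)
class NetEvent:
    timestamp: float  # seconds since epoch
    process: str
    destination: str
    bytes_out: int


def correlate(file_events, net_events, watched_prefixes,
              window=300.0, min_bytes=1_000_000):
    """Pair each access to a watched path with later large uploads inside the window."""
    suspicious = []
    for fe in file_events:
        if not any(fe.path.startswith(prefix) for prefix in watched_prefixes):
            continue  # not an agent data path we care about
        for ne in net_events:
            delay = ne.timestamp - fe.timestamp
            # The upload must follow the file access, soon after, and be large enough.
            if 0 <= delay <= window and ne.bytes_out >= min_bytes:
                suspicious.append((fe, ne))
    return suspicious
```

The pairing is purely time-based, so it also catches cases where one process stages the archive and a different process uploads it, at the cost of more false positives on busy hosts.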

Scenario 2: Agentic Attacks

Just as defenders leverage AI for different DFIR use cases, threat actors have begun adopting these technologies in parallel. We are seeing an increase in the use of AI to develop custom C2 (Command & Control) servers, implants, and broader malware infrastructures.

We are entering a new phase of malware evolution where payloads are becoming semi-autonomous or fully autonomous through AI integration. This shift gives attackers the ability to deploy malware at scale and to alter TTPs dynamically, in real time, to bypass security controls.

I have categorized this section into two primary sub-scenarios:

- AI Supply Chain Attacks: Embedding malicious instructions and tools inside Agent Skills and MCP servers.

- Agent Hijacking & Abuse: Weaponizing legitimate, locally installed agents to perform malicious activities such as lateral movement or data harvesting.

AI Supply Chain Attacks

AI infrastructure has evolved significantly, introducing powerful features like MCP (Model Context Protocol) for function calling and Agent Skills.

These new integrations have quickly become the most accessible and prominent vectors for supply chain compromise. By embedding malicious instructions and tools inside MCP servers and Skills, a threat actor can hijack an AI agent's logic. Instead of just generating text, the agent can be manipulated to execute malicious programs, exfiltrate local files, or perform unauthorized actions on the host system.

Check out this blog by Mohamed Ghobashy on MCP supply chain attacks to see them in action.

Agent Hijacking & Abuse

While I was writing this, Check Point released a fantastic report: "AI in the Middle: Turning Web-Based AI Services into C2 Proxies & The Future Of AI Driven Attacks". This research explores several AI-driven attack scenarios, specifically covering API-based and AI-bundled malware. They also showcased an attack in which Grok and Copilot were abused to act as command-and-control relays, which was impressive and scary at the same time.

As I continued exploring AI agents, I started thinking about an additional scenario:

flowchart TD

A["🕵️ Attacker"]

B["🖥️ Compromised System"]

C["🤖 AI Agent"]

D["🔗 Internal Network"]

E["☁️ Attacker Controlled Server / Service"]

A -->|"Initial Compromise via RAT or other type of Malware"| B

B -->|"Utilizing AI Agents for Malicious Activities"| C

C -->|"Moving Laterally Across Network"| D

C -->|"Data Exfiltration"| E

What if attackers abuse locally installed AI agents and use them as LOLBins for lateral movement and other operations, without relying on API keys or bundled models? I plan to showcase this in my next post, where I will conduct a full adversary simulation and threat hunting exercise. Stay tuned!

Conclusion

As AI agents become more deeply integrated into enterprise workflows, they also introduce a new and evolving attack surface. Securing them requires more than traditional security architecture.

Some points to note:

Adopt a "Defense-in-Depth" Mindset: The first and most critical step is acknowledging that no security control is perfect. While an EDR might catch 99% of threats, the remaining 1% can still cause catastrophic damage. Rather than relying on a single policy, develop a multi-layered security architecture to minimize the "blast radius" and overall impact.

Do Not Ignore Low-Severity Alerts: Seemingly low-severity alerts can often represent early reconnaissance, policy testing, or partial exploitation attempts. Treat low-severity signals as potential indicators of emerging attack chains, correlate them with other telemetry, and investigate patterns over time rather than dismissing them in isolation.

Monitor Agent Activity and File Integrity: Proactively monitor the file paths and directories used by AI agents. It is essential to detect if unauthorized or malicious programs are accessing agent configurations, memory logs, or integration settings.

Audit the AI Supply Chain: Supply chain attacks in the AI ecosystem are real. Just as we audit npm or Python packages for malicious code, it is now crucial to perform security audits on MCP servers and Agent Skills. Enforce strict governance and allowlisting policies to prevent supply chain compromise.

Leverage Continuous Threat Intelligence: Regularly ingest Threat Intelligence (TI) feeds and industry reports to stay current with the latest AI-driven attack trends and adversary tactics.

Proactive Threat Hunting: Identify new AI features and potentially abusable vectors as they are released. Use these findings to build hypothetical attack scenarios and conduct proactive threat-hunting exercises within your environment.
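The allowlisting point above can be sketched as a simple configuration check. This is an illustration under stated assumptions: it assumes a JSON configuration with a top-level `mcpServers` object mapping server names to a `command` and `args`, a layout used by several MCP clients but not guaranteed for every agent. The allowlist entry, the server names, and the `unapproved_servers` function are hypothetical.

```python
import json

# Hypothetical allowlist: server name -> exact command line that was reviewed.
ALLOWLIST = {
    "internal-docs": ["python", "-m", "internal_docs_mcp"],
}


def unapproved_servers(config_text, allowlist=ALLOWLIST):
    """Return names of configured servers that are absent from, or differ from, the allowlist."""
    config = json.loads(config_text)
    flagged = []
    for name, spec in config.get("mcpServers", {}).items():
        command_line = [spec.get("command", "")] + list(spec.get("args", []))
        # Flag unknown servers and known servers whose command line has changed.
        if allowlist.get(name) != command_line:
            flagged.append(name)
    return sorted(flagged)
```

Comparing the full command line rather than just the server name means a reviewed entry that is later modified to launch something else is also flagged.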